Built AI chatbots, agents, and assistants that “get it”

Bring unlimited knowledge to your AI applications and improve answer quality with RAG

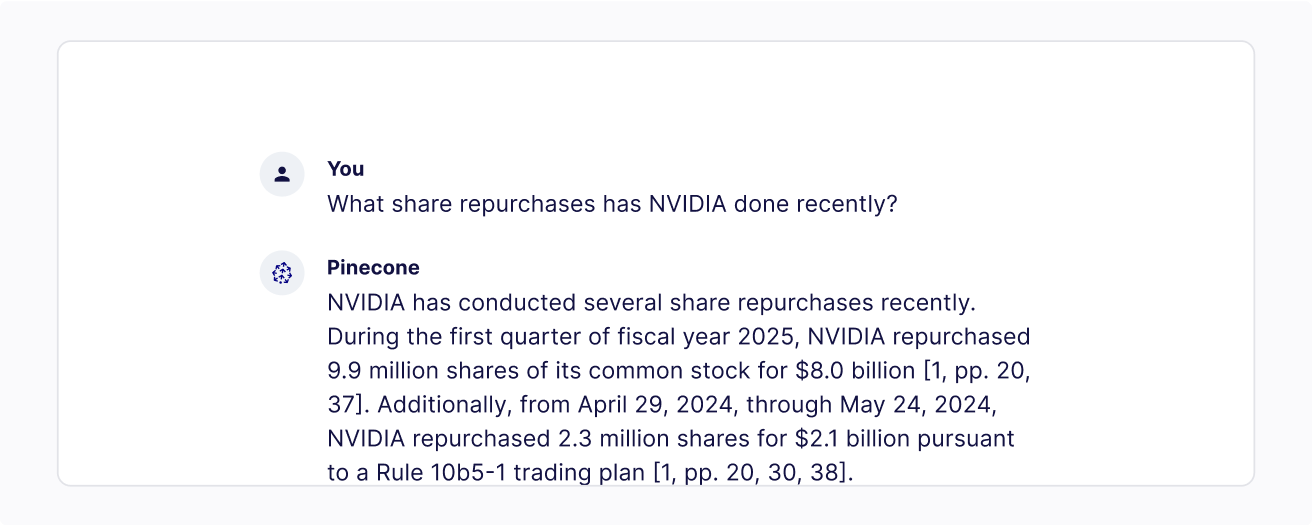

RAG with Pinecone

RAG is a framework for combining LLMs with an external vector database to generate more accurate and up-to-date responses. The Pinecone vector database lets you build RAG applications using vector search.

Reduce hallucination

Leverage domain-specific and up-to-date data at lower cost for any scale and get 50% more accurate answers with RAG.

Scale effortlessly

Supply unlimited knowledge to your AI applications with RAG without compromising performance with our serverless architecture.

Easy and reliable

Get started in a few clicks to apply RAG with enterprise-grade data security, support SLAs, and observability.

Learn how customers are using RAG with Pinecone

“We use Pinecone for vector storage and retrieval which has proved crucial for providing flexibility across different customer needs.”

Nick Gomez

Founder, Inkeep

“Pinecone serverless opened up possibilities we hadn't considered before and allows us to invest even more in our long-term product capabilities.”

Rick Vestal

Director of Engineering, DISCO

What developers can build with Pinecone

Customer service chatbots

Code generation copilot

Knowledge base Q&A

Copywriting assistant

Healthcare agents

Resources for developers

Start building knowledgeable AI now

Create your first index for free, then upgrade and pay as you go when you're ready to scale, or talk to sales.