A surprising amount of what your team knows is already written down. It's in the manual somebody put together last year, in the onboarding doc that gets passed around every time a new hire starts, in the thread where the tricky edge case finally got resolved, in the contract language your legal team has explained a hundred times. The knowledge exists. It's catalogued, stored, and searchable in theory.

But it doesn't help the person who needs it at the moment they need it.

So you answer the question. Again. And again. You find yourself reaching for the same Google Doc, pasting the same three paragraphs into Slack, saying some version of "we covered this in the handbook, let me find the section." The handbook has the answer. You have the answer. The person asking still can't get to it.

The part that's been broken

The tools that were supposed to solve this haven't. Enterprise search finds the document but leaves you to read the whole thing. Generic AI chatbots confidently make things up, or blend two policies together into an answer that sounds right but is wrong in a way you won't catch until it matters. Stuffing everything into a long context window works until the knowledge doesn't fit, or until the model can't tell which policy applies to which case, or until you realize you're spending too much burning tokens.

The other path is to build the pipeline yourself — hire the engineers, stand up retrieval, add evals, manage the refresh cycles, keep it running. Most in-house "ask our docs" projects stall out somewhere between the proof of concept and the version anyone actually uses, because the gap between retrieves relevant text and answers the question correctly turns out to be much larger than anyone expected. Plus, it keeps you from working on your core competency.

What's been missing is something in the middle: a way to put real knowledge behind an application without needing engineers to build and maintain the pipeline, and without it going off the rails the first time someone asks a question that requires more than copying a paragraph back.

Knowledge, as we use the term, is the curated, situated content your team actually operates on — policies, contracts, runbooks, tickets, the resolution to last month's weird edge case — together with the context that makes it usable: what's current, what supersedes what, which version applies in which situation. The difference between a system of record (what your data warehouse stores) and a system of knowledge (what your team actually knows). Not the model's training data. Not a pile of retrieved chunks the application has to stitch back together.

What Marketplace actually does

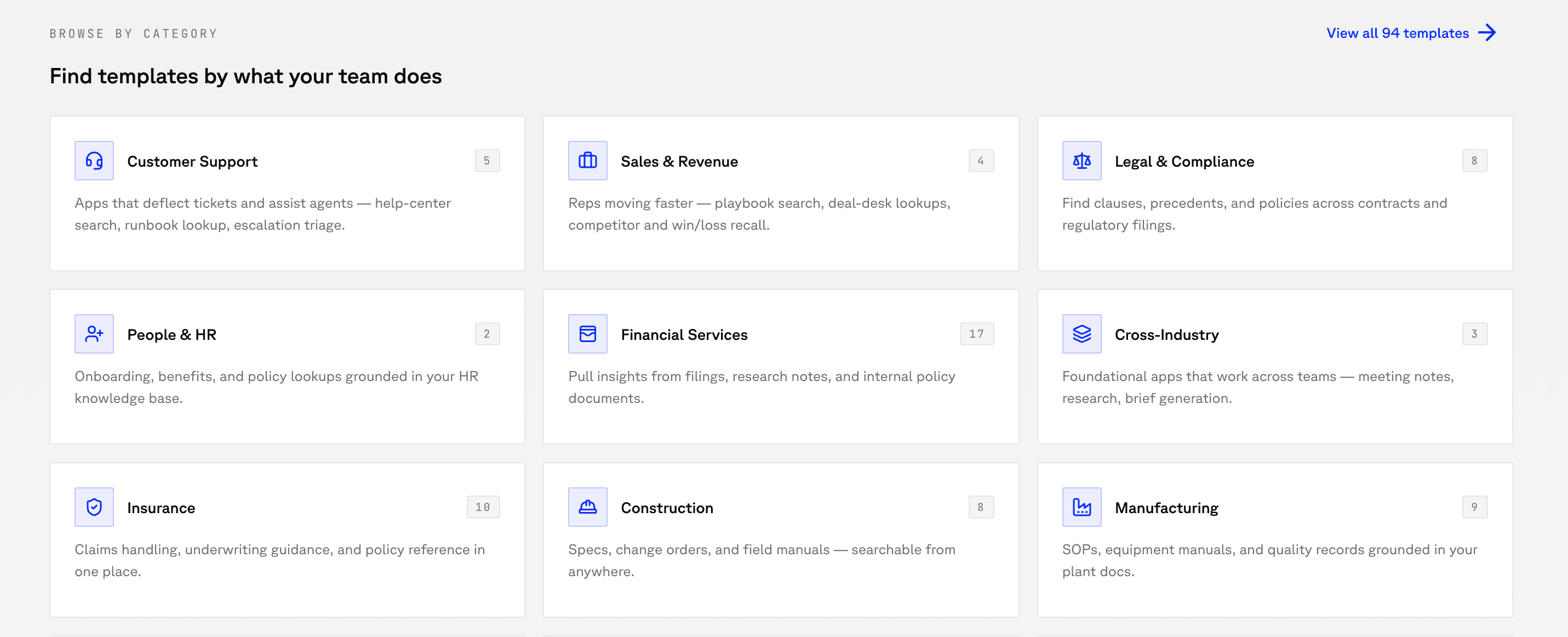

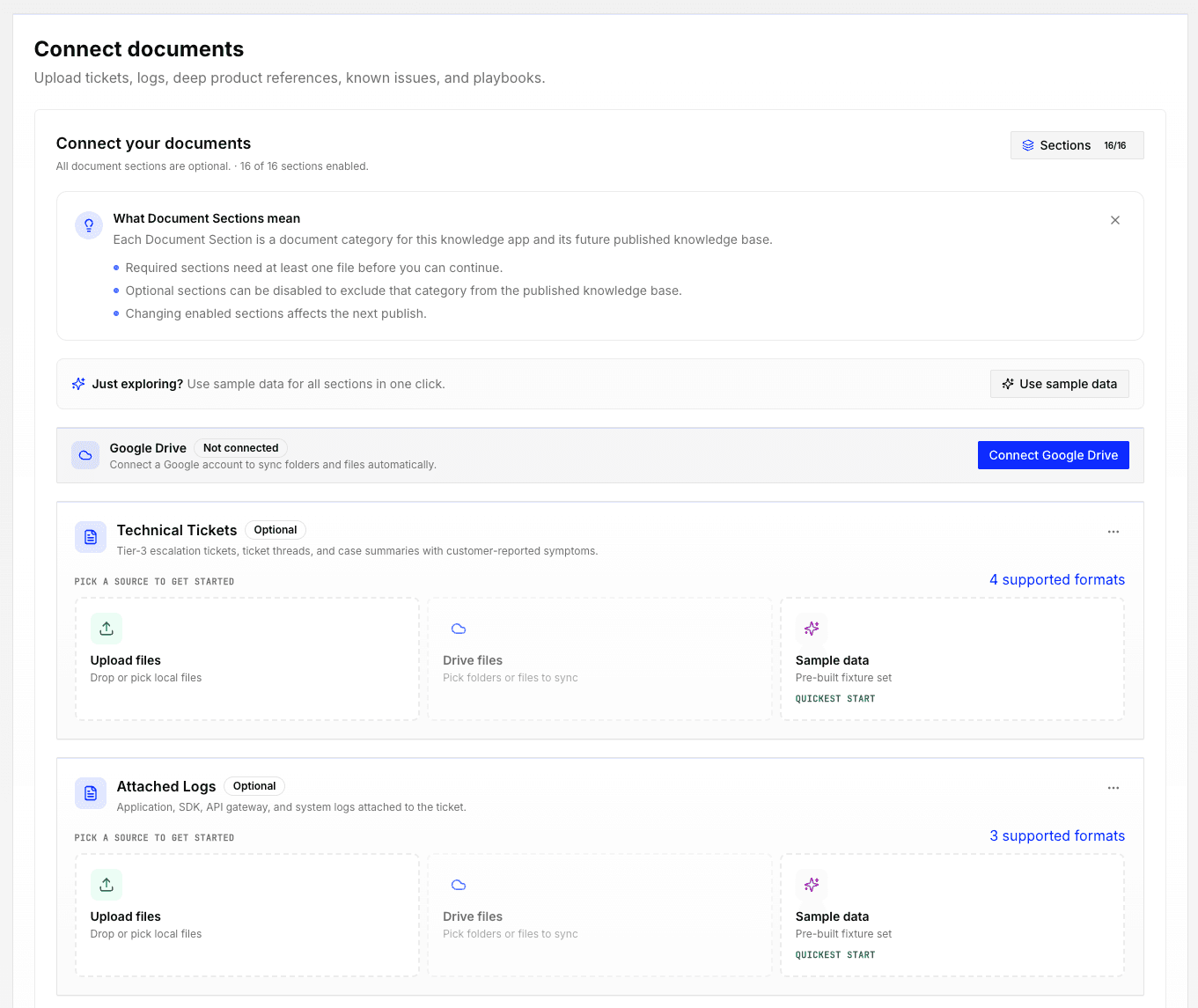

It starts with a template. Pick one for the kind of application you want — customer support, legal search, sales enablement, onboarding, or something else — and point it at the knowledge it should use. A folder in Google Drive, a stack of PDFs, a wiki, a set of tickets. Marketplace ingests everything, and a few minutes later you have something your team can start asking questions of.

Answers come with receipts. The system responds in full sentences, with citations that point back to the specific documents the answer came from. You can see what it knows — and what it doesn't, which turns out to be almost as important. When a question falls outside its scope, it says so instead of inventing an answer to be helpful. When answering a question requires pulling from more than one document — a contract clause that interacts with a policy, a support issue that touches two different product areas — it reasons through the connection instead of stitching together unrelated fragments. That stitching is the failure mode that makes most AI answers untrustworthy. (More on how that works here.)

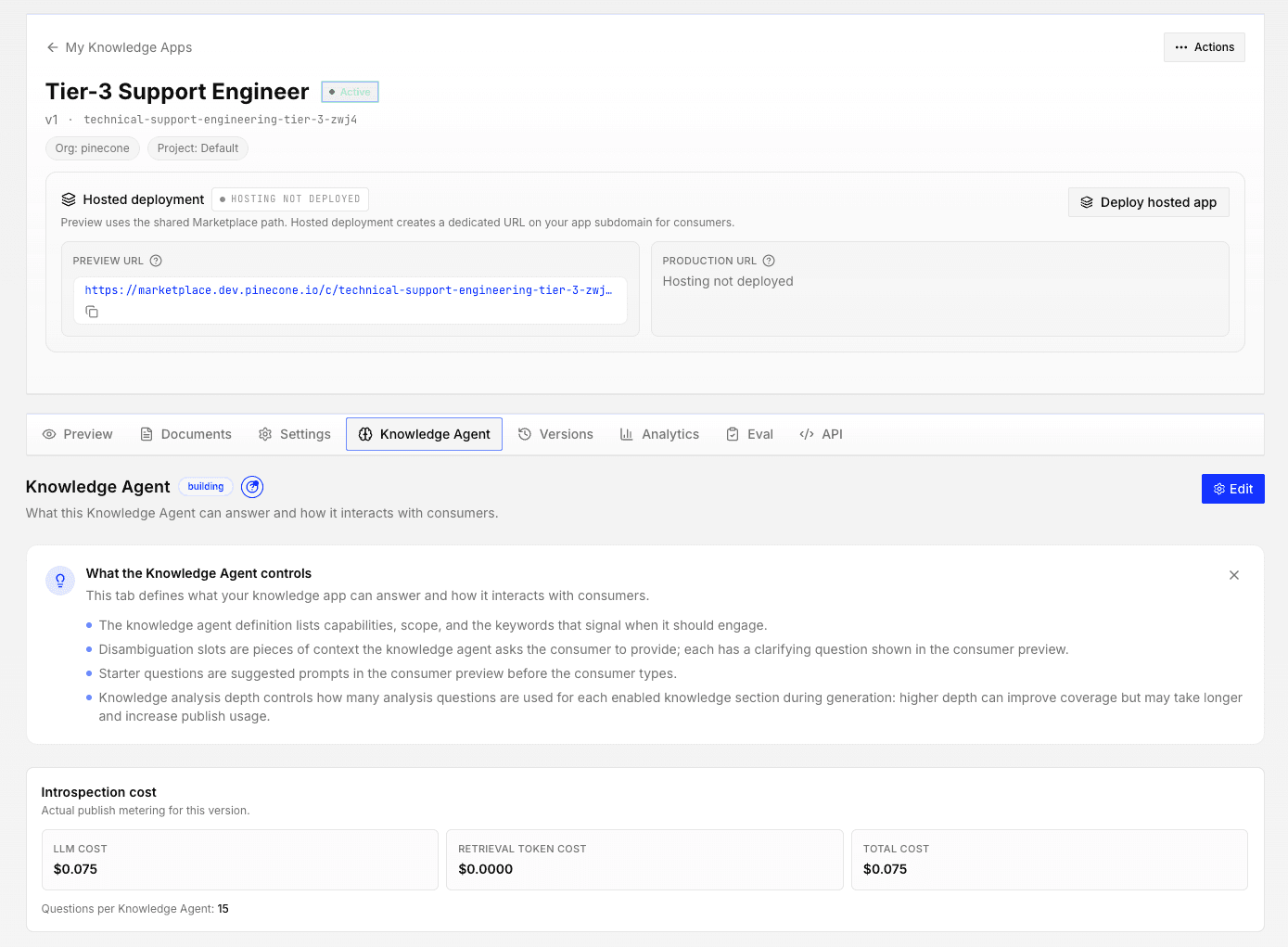

Publishing is one click. Once the application is live, your team accesses it as a web app at a URL. Gate access with single sign-on, open it to a whole department, or share it with partner outside your organization. The same knowledge layer can also serve the AI agents your company is starting to deploy — so agents answer from the same vetted sources your team does, and every improvement to the knowledge base benefits both. The work you put into Marketplace compounds over time.

Why it's different

Three things matter for the knowledge worker deciding whether to trust this with real work.

Every answer traces back to a source. Not a vague gesture at "the knowledge base," but a specific citation to a specific document. If the application tells a new hire how your refund policy works, they can click through and read the actual policy. This is what makes the answers trustworthy, and it's what makes the system useful for anything that has real consequences.

It's honest about its edges. The system knows what it knows, and it's built to say so when a question falls outside that scope. The worst failure mode in an AI tool isn't being wrong; it's being confidently wrong about something the reader can't verify. Marketplace is designed to fail loudly and truthfully, not quietly and confidently.

You don't need an engineering team to run it. This is the part that has blocked most knowledge workers from solving this problem themselves. Marketplace handles the infrastructure — the ingestion, the updating, the versioning, the scaling — so the work you do is the work you already know how to do: decide what knowledge should be in there, write the occasional clarifying note, review the questions people are asking. You stay focused on the work you do best. The system does the parts that were never your job in the first place.

Where you take it

Start small. Pick one corner of your team's knowledge that people keep asking about, stand up an application for it, see if it changes the shape of your week. If it does, the same foundation scales — to more of your team's knowledge, to more teams in your organization, to applications you share with customers and partners, and eventually to the AI agents that will be asking questions on behalf of people who haven't even joined yet.

The line between "knowledge for people" and "knowledge for agents" is thinner than it looks, and the thing you build here works on both sides of it.

Getting started

Free on Starter — 1M input tokens/month through June 30 (2x the usual) so you can test it on real workloads.

Setup is three steps and takes about as long as writing the email that announces it.

1. Pick a template. Customer support, legal search, sales enablement, onboarding, internal IT, or a blank starting point. Each template ships with a tuned prompt, a recommended schema, and defaults that match the shape of the problem.

2. Connect your sources. Google Drive folders, PDF uploads, wiki exports, ticket exports. Marketplace handles chunking, embedding, and indexing. Re-indexing on source changes is automatic.

3. Publish. One click, a branded interface, SSO-gated, shareable internally or externally. Connect to agents through the same endpoint when you're ready.

Marketplace is available now as a web application. Start at marketplace.pinecone.io.

Was this article helpful?